Debunking algorithmic qubits

Executive Summary: Quantinuum’s H-Series computers have the highest performance in the industry, verified by multiple widely adopted benchmarks including quantum volume. We demonstrate that an alternative benchmark called algorithmic qubits is deeply flawed, hiding computer performance behind a plurality voting trick and gate compilations that are not widely useful.

Recently, a new benchmark called algorithmic qubits (AQ) has started to be confused with quantum volume measurements. Quantum volume (QV) was specifically designed to be hard to “game”; however, the algorithmic qubits test turns out to be very susceptible to tricks that can make a quantum computer look much better than it actually is. While it is not clear what can be done to fix the algorithmic qubits test, it is already clear that it is much easier to pass than QV and is a poor substitute for measuring performance. It is also important to note that algorithmic qubits are not the same as logical qubits, which are necessary for full fault-tolerant quantum computing.

To make this point clear, we simulated what algorithmic qubits data would look like for two machines, one clearly much higher performing than the other. We applied two tricks that are typically used when sharing algorithmic qubits results: gate compilation and error mitigation with plurality voting. From the data above, you can see how these tricks are misleading without further information. For example, if you compare data from the higher fidelity machine without any compilation or plurality voting (bottom left) to data from the inferior machine with both tricks (top right), you may incorrectly believe the inferior machine is performing better. Unfortunately, this inaccurate and misleading comparison has been made in the past. It is important to note that algorithmic qubits uses a subset of algorithms from a QED-C paper that introduced a suite of application-oriented tests and created a repository to test available quantum computers. Importantly, that work explicitly forbids the compilation and error mitigation techniques that are causing the issue here.

As a demonstration of the perils of AQ as a benchmark, we look at data obtained on both Quantinuum’s H2-1 system as well as publicly available data from IonQ’s Forte system.

We reproduce data without any error mitigation from IonQ’s publicly released data in association with a preprint posted to the arXiv, and compare it to data taken on our H2-1 device. Without error mitigation, IonQ Forte achieves an AQ score of 9, whereas Quantinuum H2-1 achieves AQ of 26. Here you can clearly see improved circuit fidelities on the H2-1 device, as one would expect from the higher reported 2Q gate fidelities (average 99.816(5)% for Quantinuum’s H2-1 vs 99.35% for IonQ’s Forte). However, after you apply error mitigation, in this case plurality voting, to both sets of data the picture changes substantially, hiding each underlying computer’s true capabilities.

Here the H2-1 algorithmic performance still exceeds Forte (from the publicly released data), but the perceived gap has been reduced by error mitigation.

“Error mitigation, including plurality voting, may be a useful tool for some near-term quantum computing but it doesn’t work for every problem and it’s unlikely to be scalable to larger systems. In order to achieve the lofty goals of quantum computing we’ll need serious device performance upgrades. If we allow error mitigation in benchmarking it will conflate the error mitigation with the underlying device performance. This will make it hard for users to appreciate actual device improvements that translate to all applications and larger problems,” explained Dr. Charlie Baldwin, a leader in Quantinuum’s benchmarking efforts.
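To make the effect of plurality voting concrete, here is a minimal sketch in Python. It is a generic illustration under simplifying assumptions, not the exact procedure used by any vendor: each simulated shot returns the ideal bitstring with probability `p_correct` and a uniformly random bitstring otherwise, several circuit variants are sampled, and the vote keeps only the most common outcome of each variant. The names `sample_counts` and `plurality_vote` and all parameter values are hypothetical.

```python
import random
from collections import Counter


def sample_counts(ideal, p_correct, n_bits, shots, rng):
    """Simulate one circuit variant on a toy noisy device.

    Each shot yields `ideal` with probability `p_correct`; otherwise it
    yields a uniformly random bitstring (an assumed, simplistic noise model).
    """
    counts = Counter()
    for _ in range(shots):
        if rng.random() < p_correct:
            counts[ideal] += 1
        else:
            counts[format(rng.getrandbits(n_bits), f"0{n_bits}b")] += 1
    return counts


def plurality_vote(variant_counts):
    """Keep each variant's most common bitstring, then return the plurality winner."""
    winners = [counts.most_common(1)[0][0] for counts in variant_counts]
    return Counter(winners).most_common(1)[0][0]


rng = random.Random(0)  # fixed seed so the sketch is reproducible
ideal = "1011"
variants = [sample_counts(ideal, 0.3, 4, 200, rng) for _ in range(5)]

# Unmitigated view: fraction of all shots that returned the ideal answer.
raw = sum(variants, Counter())
raw_success = raw[ideal] / sum(raw.values())

# Mitigated view: a single voted answer, with the noise no longer visible.
voted = plurality_vote(variants)

print(f"raw success fraction: {raw_success:.2f}")
print(f"plurality-voted answer: {voted} (ideal: {ideal})")
```

In this toy model the ideal answer appears in only a minority of shots, yet the voted result is the ideal bitstring. That is the sense in which voting can hide how noisy the underlying device is, and it only helps when the ideal output is concentrated on a single bitstring.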

There are other issues with the algorithmic qubits test. The circuits used in the test can be reduced to very easy-to-run circuits with basic quantum circuit compilation tools that are freely available in packages like pytket. For example, the largest phase estimation and amplitude estimation tests required to pass AQ=32 are specified with 992 and 868 entangling gates respectively, but applying pytket optimization reduces the circuits to 141 and 72 entangling gates. This is only possible due to choices in constructing the benchmarks and will not be universally available when using the algorithms in applications. Since AQ reports the precompiled gate counts, this may also lead users to expect a machine to be able to run many more entangling gates than what is actually possible on the benchmarked hardware.
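A rough back-of-the-envelope calculation shows why the reported gate count matters. The sketch below assumes a deliberately crude model in which the circuit fidelity is the two-qubit gate fidelity raised to the number of entangling gates, ignoring every other error source. This model is an assumption made for illustration and is not how AQ is scored. The gate counts and the 99.816% fidelity are the figures quoted above; the function name is hypothetical.

```python
def estimated_circuit_fidelity(gate_fidelity, n_entangling_gates):
    """Crude estimate: every entangling gate succeeds independently."""
    return gate_fidelity ** n_entangling_gates


# Two-qubit gate fidelity quoted above for H2-1 (99.816%).
H2_1_2Q_FIDELITY = 0.99816

# (entangling gates as specified, entangling gates after pytket optimization)
GATE_COUNTS = {
    "phase estimation": (992, 141),
    "amplitude estimation": (868, 72),
}

for name, (as_specified, compiled) in GATE_COUNTS.items():
    f_spec = estimated_circuit_fidelity(H2_1_2Q_FIDELITY, as_specified)
    f_comp = estimated_circuit_fidelity(H2_1_2Q_FIDELITY, compiled)
    print(f"{name}: {as_specified} gates -> {f_spec:.3f}, "
          f"{compiled} gates -> {f_comp:.3f}")
```

Under this model, a machine that passes the compiled circuit comfortably would still be expected to fail a circuit that genuinely required the specified number of entangling gates, which is the expectation gap described above.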

What makes a good quantum benchmark? Quantum benchmarking is extremely useful in charting the hardware progress and providing roadmaps for future development. However, quantum benchmarking is an evolving field that is still an open area of research. At Quantinuum we believe in testing the limits of our machine with a variety of different benchmarks to learn as much as possible about the errors present in our system and how they affect different circuits. We are open to working with the larger community on refining benchmarks and creating new ones as the field evolves.

To learn more about the Algorithmic Qubits benchmark and the issues with it, please watch this video where Dr. Charlie Baldwin walks us through the details, starting at 32:40.

About Quantinuum

Quantinuum, the world’s largest integrated quantum company, pioneers powerful quantum computers and advanced software solutions. Quantinuum’s technology drives breakthroughs in materials discovery, cybersecurity, and next-gen quantum AI. With over 500 employees, including 370+ scientists and engineers, Quantinuum leads the quantum computing revolution across continents.

One of the greatest privileges of working directly with the world’s most powerful quantum computer at Quantinuum is building meaningful experiments that convert theory into practice. The privilege becomes even more compelling when considering that our current quantum processor – our H2 system – will soon be enhanced by Helios, a quantum computer a stunning trillion times more powerful, and due for launch in just a few months. The moment has now arrived when we can build a timeline for applications that quantum computing professionals have anticipated for decades and which are experimentally supported.

Background

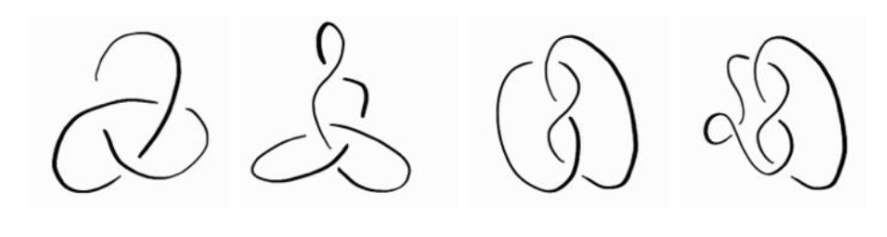

In the 1980s, in the years after Richard Feynman and David Deutsch were working on their initial thoughts around quantum computing, Nicholas Cozzarelli at the University of California, Berkeley, was grappling with a biochemical riddle – “how do enzymes called topoisomerases and recombinases untangle the DNA strands that knot themselves inside cells?”

Cozzarelli teamed up with mathematicians including De Witt Sumners, who recognized that these twisted strands could be modelled using the language of knots.

Knot theory’s equations let them deduce how enzymes snipped, flipped and reattached DNA, demystifying processes essential to life. Decoding the knots in DNA proved crucial to designing better antibiotics and in advancing genetic engineering.

Cozzarelli’s team took advantage of the power of knot invariants—polynomial expressions that remain consistent markers of a knot’s identity, no matter how tangled the loops become. This is just one example of how knot theory has been used to solve real-world problems of practical value.

Today, knot theory finds practical uses in fields as diverse as chemistry, robotics, fluid dynamics, and drug design. Measuring the invariants that characterize each knot is a challenge that scales exponentially with the complexity of the knots.

This work shows how a quantum computer can cut through this exponential explosion, indicating that Quantinuum's next-generation systems will offer practical quantum advantage in solving knot theory problems.

In this article, Konstantinos Meichanetzidis, a team leader from Quantinuum’s AI group, explains intriguing and valuable new research into applying quantum computers to addressing problems in knot theory.

Quantifying quantum advantage for knot theory

Quantinuum’s applied quantum algorithms team has published a historic end-to-end algorithm for solving a famous problem in knot theory, via a preprint paper on the arXiv. The research team, led by Konstantinos Meichanetzidis, also included Quantinuum researchers Enrico Rinaldi, Chris Self, Eli Chertkov, Matthew DeCross, David Hayes, Brian Neyenhuis, Marcello Benedetti, and Tuomas Laakkonen of the Massachusetts Institute of Technology.

The project was motivated by building configurable and comprehensive algorithmic tools to pinpoint quantum advantage in practice. This was done by rigorously defining time and error budgets and quantifying both the classical and quantum resource requirements necessary to meet them. Considering realistic quantum and classical processors, they predict that Quantinuum’s forthcoming quantum computers meet those requirements.

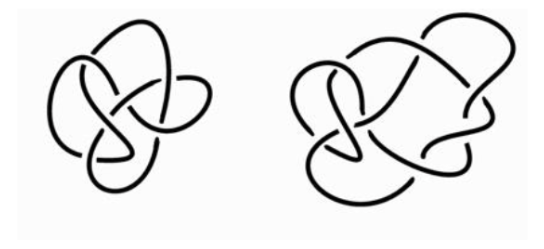

Knot theory is part of a field of mathematics called ‘low-dimensional topology’, with a rich history, stemming from a wild idea proposed by Lord Kelvin, who conjectured that chemical elements are different knots formed by vortices in the ether. Of course, we know today that the ether theory did not hold up under experimental scrutiny, but mathematicians have been classifying and studying knots ever since. Knot theory is intrinsically linked with many aspects of physics. For example, it naturally shows up in certain spin models in statistical mechanics. Today, physical properties of knots are important in understanding the stability of macromolecular structures, from DNA and proteins, to polymers relevant to materials design. Knots find their way into cryptography. Even the magnetohydrodynamical properties of knotted magnetic fields on the surface of the sun are an important indicator of solar activity.

Most importantly for our context, knot theory has fundamental connections to quantum computation, originally outlined by Witten’s work in topological quantum field theory, concerning spacetimes without any notion of distance but only shape. In fact, this connection formed the very motivation for attempting to build topological quantum computers, where anyons – exotic quasiparticles that live in two-dimensional materials – are braided to perform quantum gates.

Konstantinos Meichanetzidis, who led the project, said: “The relation between knot theory and quantum physics is the most beautiful and bizarre fact you have never heard of.”

The fundamental problem in knot theory is distinguishing knots, or more generally, links. To this end, mathematicians have defined link invariants, which serve as ‘fingerprints’ of a link. As there are many equivalent representations of the same link, an invariant, by definition, is the same for all of them. If the invariant is different for two links then they are not equivalent. The specific invariant our team focused on is the Jones polynomial.

The mind-blowing fact here is that any quantum computation corresponds to evaluating the Jones polynomial of some link, as shown by the works of Freedman, Larsen, Kitaev, Wang, Shor, Arad, and Aharonov. It reveals that this abstract mathematical problem is truly quantum native. In particular, the problem our team tackled was estimating the Jones polynomial at the 5th root of unity. This is a well-studied case due to its relation to the infamous Fibonacci anyons, whose braiding is capable of universal quantum computation.

Building and improving on the work of Shor, Aharonov, Landau, Jones, and Kauffman, our team developed an efficient quantum algorithm that works end-to-end. That is, given a link, it outputs a highly optimized quantum circuit that is readily executable on our processors and estimates the desired quantity. Furthermore, our team designed problem-tailored error detection and error mitigation strategies to achieve a higher accuracy.

In addition to providing a full pipeline for solving this problem, a major aspect of this work was to use the fact that the Jones polynomial is an invariant to introduce a benchmark for noisy quantum computers. Most importantly, this benchmark is efficiently verifiable, a rare property since for most applications, exponentially costly classical computations are necessary for verification. Given a link whose Jones polynomial is known, the benchmark constructs a large set of topologically equivalent links of varying sizes. In turn, these result in a set of circuits of varying numbers of qubits and gates, all of which should return the same answer. Thus, one can characterize the effect of noise present in a given quantum computer by quantifying the deviation of its output from the known result.
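The verification idea can be sketched classically for a link whose Jones polynomial is known. The example below uses the trefoil knot, whose Jones polynomial for one chirality is V(t) = t + t^3 - t^4 (the mirror image replaces t with 1/t), and evaluates it at exp(2πi/5), which we assume here to be the 5th root of unity meant above. The function names are hypothetical, and the "estimates" are synthetic placeholders built by adding fixed offsets to the exact value; they are not measured data.

```python
import cmath


def jones_trefoil(t):
    """Jones polynomial of the trefoil (one chirality): V(t) = t + t^3 - t^4."""
    return t + t**3 - t**4


def benchmark_errors(estimates, reference):
    """Absolute deviation of each estimate from the known invariant value."""
    return {label: abs(value - reference) for label, value in estimates.items()}


# A primitive 5th root of unity (assumed choice of root).
t5 = cmath.exp(2j * cmath.pi / 5)
reference = jones_trefoil(t5)

# Synthetic placeholders standing in for device estimates obtained from
# topologically equivalent representations of different sizes.
estimates = {
    "small representation": reference + 0.01,
    "large representation": reference + 0.05j,
}

for label, error in benchmark_errors(estimates, reference).items():
    print(f"{label}: deviation {error:.3f}")
```

Because every equivalent representation must return the same value, any spread in the deviations reflects noise rather than a change in the problem.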

The benchmark introduced in this work allows one to identify the link sizes for which there is exponential quantum advantage in terms of time to solution against the state-of-the-art classical methods. These resource estimates indicate our next processor, Helios, with 96 qubits and at least 99.95% two-qubit gate-fidelity, is extremely close to meeting these requirements. Furthermore, Quantinuum’s hardware roadmap includes even more powerful machines that will come online by the end of the decade. Notably, an advantage in energy consumption emerges for even smaller link sizes. Meanwhile, our teams aim to continue reducing errors through improvements in both hardware and software, thereby moving deeper into quantum advantage territory.

The importance of this work, indeed the uniqueness of this work in the quantum computing sector, is its practical end-to-end approach. The advantage-hunting strategies introduced are transferable to other “quantum-easy classically hard” problems.

Our team’s efforts motivate shifting the focus toward specific problem instances rather than broad problem classes, promoting an engineering-oriented approach to identifying quantum advantage. This involves carefully considering how quantum advantage should be defined and quantified, thereby setting a high standard for quantum advantage in scientific and mathematical domains and instilling confidence in our customers and partners.

Quantinuum and NVIDIA, world leaders in their respective sectors, are combining forces to fast-track commercially scalable quantum supercomputers, further bolstering the announcement Quantinuum made earlier this year about the exciting new potential in Generative Quantum AI.

Make no mistake about it, the global quantum race is on. With over $2 billion raised by companies in 2024 alone, and over 150 new startups in the past five years, quantum computing is no longer restricted to ‘the lab’.

The United Nations proclaimed 2025 as the International Year of Quantum Science and Technology (IYQ), and as we march toward the end of the first quarter, the old maxim that quantum computing is still a decade (or two, or three) away is no longer relevant in today’s world. Governments, commercial enterprises and scientific organizations all stand to benefit from quantum computers, led by those built by Quantinuum.

That is because, amid the flurry of headlines and social media chatter filled with aspirational statements of future ambitions shared by those in the heat of this race, we at Quantinuum continue to lead by example. We demonstrate what that future looks like today, rather than relying solely on slide deck presentations.

Our quantum computers are the most powerful systems in the world. Our H2 system, the only quantum computer that cannot be classically simulated, is years ahead of any other system being developed today. In the coming months, we’ll introduce our customers to Helios, a trillion times more powerful than H2, further extending our lead beyond where the competition is still only planning to be.

At Quantinuum, we have been convinced for years that the impact of quantum computers on the real world will happen earlier than anticipated. However, we have also known that this impact will come when powerful quantum computers and powerful classical systems work together.

This sort of hybrid ‘supercomputer’ has been referenced a few times in the past few months, and there is, rightly, a sense of excitement about what such an accelerated quantum supercomputer could achieve.

The Power of Hybrid Quantum and Classical Compute

In a revolutionary move on March 18th, 2025, at the GTC AI conference, NVIDIA announced the opening of a world-class accelerated quantum research center with Quantinuum selected as a key founding collaborator to work on projects with NVIDIA at the center.

With details shared in an accompanying press statement and blog post, the NVIDIA Accelerated Quantum Research Center (NVAQC) being built in Boston, Massachusetts, will integrate quantum computers with AI supercomputers to ultimately explore how to build accelerated quantum supercomputers capable of solving some of the world’s most challenging problems. The center will begin operations later this year.

As shared in Quantinuum’s accompanying statement, the center will draw on the NVIDIA CUDA-Q platform, alongside an NVIDIA GB200 NVL72 system containing 576 NVIDIA Blackwell GPUs dedicated to quantum research.

The Role of CUDA-Q in Quantum-Classical Integration

Integrating quantum and classical hardware relies on a platform that can allow researchers and developers to quickly shift context between these two disparate computing paradigms within a single application. The NVIDIA CUDA-Q platform will be the entry point for researchers to exploit the NVAQC quantum-classical integration.

In 2022, Quantinuum became the first company to bring CUDA-Q to its quantum systems, establishing a pioneering collaboration that continues today. Users of CUDA-Q are currently offered access to Quantinuum’s System H1 QPU and emulator for 90 days.

Quantinuum’s future systems will continue to support the CUDA-Q platform. Furthermore, Quantinuum and NVIDIA are committed to evolving and improving tools for quantum classical integration to take advantage of the latest hardware features, for example, on our upcoming Helios generation.

The Gen-Q-AI Moment

A few weeks ago, we disclosed high-level details about an AI system that we refer to as Generative Quantum AI, or GenQAI. We highlighted a timeline, between now and the end of this year, for the first commercial systems that can accelerate both existing AI and quantum computers.

At a high level, an AI system such as GenQAI will be enhanced by access to information that has not previously been accessible: information generated by a quantum computer that cannot be simulated. This information and its effect can be likened to a powerful microscope that brings accuracy and detail to already powerful LLMs, bridging the gap from today’s impressive accomplishments towards truly impactful outcomes in areas such as biology and healthcare, material discovery and optimization.

Through the integration of the most powerful quantum and classical systems, and by enabling tighter integration of AI with quantum computing, the NVAQC will be an enabler for the realization of the accelerated quantum supercomputer needed for GenQAI products and their rapid deployment and exploitation.

Innovating our Roadmap

The NVAQC will foster the tools and innovations needed for fully fault-tolerant quantum computing and will be an enabler of the roadmap Quantinuum released last year.

With each new generation of our quantum computing hardware and accompanying stack, we continue to scale compute capabilities through more powerful hardware and advanced features, accelerating the timeline for practical applications. To achieve these advances, we integrate the best CPU and GPU technologies alongside our quantum innovations. Our long-standing collaboration with NVIDIA drives these advancements forward and will be further enriched by the NVAQC.

Here are a couple of examples:

In quantum error correction, error syndromes detected by measuring "ancilla" qubits are sent to a "decoder." The decoder analyzes this information to determine if any corrections are needed. These complex algorithms must be processed quickly and with low latency, requiring advanced CPU and GPU power to calculate and apply corrections keeping logical qubits error-free. Quantinuum has been collaborating with NVIDIA on the development of customized GPU-based decoders which can be coupled with our upcoming Helios system.

In our application space, we recently announced the integration of InQuanto v4.0, the latest version of Quantinuum’s cutting edge computational chemistry platform, with NVIDIA cuQuantum SDK to enable previously inaccessible tensor-network-based methods for large-scale and high-precision quantum chemistry simulations.
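For the error-correction example above, the syndrome-to-decoder flow can be illustrated with the simplest textbook case, a three-qubit bit-flip repetition code with a lookup-table decoder. This is a classical toy model over bit values, chosen only to show the data flow; it is not the GPU-based decoder under development, and the function names are hypothetical.

```python
# Map each syndrome (parity of q0,q1 and parity of q1,q2) to the data
# qubit that a single bit-flip error most likely hit, or None if clean.
SYNDROME_TO_CORRECTION = {
    (0, 0): None,
    (1, 0): 0,
    (1, 1): 1,
    (0, 1): 2,
}


def measure_syndrome(data):
    """Parities that the two ancilla qubits would report."""
    return (data[0] ^ data[1], data[1] ^ data[2])


def decode_and_correct(data):
    """Look up the correction for the measured syndrome and apply it."""
    flip = SYNDROME_TO_CORRECTION[measure_syndrome(data)]
    corrected = list(data)
    if flip is not None:
        corrected[flip] ^= 1
    return corrected


# A logical 1 encoded as [1, 1, 1] with a bit flip on the middle qubit.
noisy = [1, 0, 1]
print(measure_syndrome(noisy), decode_and_correct(noisy))
```

Real codes have far larger syndrome spaces, so a lookup table quickly becomes impractical, which is one reason fast, low-latency decoding hardware matters.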

Our work with NVIDIA underscores the partnership between quantum computers and classical processors to maximize the speed toward scaled quantum computers. These systems offer error-corrected qubits for operations that accelerate scientific discovery across a wide range of fields, including drug discovery and delivery, financial market applications, and essential condensed matter physics, such as high-temperature superconductivity.

We look forward to sharing details with our partners and bringing meaningful scientific discovery to generate economic growth and sustainable development for all of humankind.

In the rapidly advancing world of quantum computing, to be a leader means not just keeping pace with innovation but driving it forward. It means setting new standards that shape the future of quantum computing performance. A recent independent study comparing 19 quantum processing units (QPUs) on the market today has validated what we’ve long known to be true: Quantinuum’s systems are the undisputed leaders in performance.

The Benchmarking Study

A comprehensive study conducted by a joint team from the Jülich Supercomputing Centre, AIDAS, RWTH Aachen University, and Purdue University compared QPUs from leading companies like IBM, Rigetti, and IonQ, evaluating how well each executed the Quantum Approximate Optimization Algorithm (QAOA), a widely used algorithm that provides a system level measure of performance. After thorough examination, the study concluded that:

“...the performance of quantinuum H1-1 and H2-1 is superior to that of the other QPUs.”

Quantinuum emerged as the clear leader, particularly in full connectivity, the most critical category for solving real-world optimization problems. Full connectivity is a huge comparative advantage, offering more computational power and more flexibility in both error correction and algorithmic design. Our dominance in full connectivity—unattainable for platforms with natively limited connectivity—underscores why we are the partner of choice in quantum computing.

Leading Across the Board

We take benchmarking seriously at Quantinuum. We lead in nearly every industry benchmark, from best-in-class gate fidelities to a 4000x lead in quantum volume, delivering top performance to our customers.

Our Quantum Charge-Coupled Device (QCCD) architecture has been the foundation of our success, delivering consistent performance gains year-over-year. Unlike other architectures, QCCD offers all-to-all connectivity, world-record fidelities, and advanced features like real-time decoding. Altogether, it’s clear we have superior performance metrics across the board.

While many claim to be the best, we have the data to prove it. This table breaks down industry benchmarks, using the leading commercial spec for each quantum computing architecture.

These metrics are the key to our success. They demonstrate why Quantinuum is the only company delivering meaningful results to customers at a scale beyond classical simulation limits.

Our progress builds upon a series of Quantinuum’s technology breakthroughs, including the creation of the most reliable and highest-quality logical qubits, as well as solving the key scalability challenge associated with ion-trap quantum computers — culminating in a commercial system with greater than 99.9% two-qubit gate fidelity.

From our groundbreaking progress with System Model H2 to advances in quantum teleportation and solving the wiring problem, we’re taking major steps to tackle the challenges our whole industry faces, like execution speed and circuit depth. Advancements in parallel gate execution, faster ion transport, and high-rate quantum error correction (QEC) are just a few ways we’re maintaining our lead far ahead of the competition.

This commitment to excellence ensures that we not only meet but exceed expectations, setting the bar for reliability, innovation, and transformative quantum solutions.

Onward and Upward

To bring it back to the opening message: to be a leader means not just keeping pace with innovation but driving it forward. It means setting new standards that shape the future of quantum computing performance.

We are just months away from launching Quantinuum’s next generation system, Helios, which will be one trillion times more powerful than H2. By 2027, Quantinuum will launch the industry’s first 100-logical-qubit system, featuring best-in-class error rates, and we are on track to deliver fault-tolerant computation on hundreds of logical qubits by the end of the decade.

The evidence speaks for itself: Quantinuum is setting the standard in quantum computing. Our unrivaled specs, proven performance, and commitment to innovation make us the partner of choice for those serious about unlocking value with quantum computing. Quantinuum is committed to doing the hard work required to continue setting the standard and delivering on our promises. This is Quantinuum. This is leadership.

Dr. Chris Langer is a Fellow, a key inventor and architect for the Quantinuum hardware, and serves as an advisor to the CEO.
