Quantinuum Launches the Most Benchmarked Quantum Computer in the World and Publishes All the Data

New H2-1 shows strong performance across 15 benchmarks while expanding to 32 qubits and reaching a new Quantum Volume record of 65,536

Quantinuum’s new H2-1 quantum computer shows that trapped-ion architecture, which is well known for achieving outstanding qubit quality and gate fidelity, is also built for scale – and Quantinuum’s benchmarking team has the data to prove it.

The bottom line: the new System Model H2 surpasses the H1 in complexity and qubit capacity while maintaining all the capabilities and fidelities of the previous generation – an astounding accomplishment when developing successive generations of quantum systems.

The newest entry in the H-Series is starting off with 32 qubits whereas H1 started with 10. H1 underwent several upgrades, ultimately reaching a 20-qubit capacity, and H2 is poised to pick up the torch and run with it. Staying true to the ultimate goal of increasing performance, H2 does not simply increase the qubit count but has already achieved a higher Quantum Volume than any other quantum computer ever built: 2¹⁶, or 65,536.
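For readers unfamiliar with the metric, the figure above follows the usual convention that quantum volume is reported as 2 raised to the width of the largest square (width equal to depth) random circuit the machine runs successfully. A minimal sketch of that arithmetic, with function names chosen here purely for illustration:

```python
def quantum_volume(circuit_width: int) -> int:
    """Quantum volume reported for passing the test at this circuit width (QV = 2**n)."""
    return 2 ** circuit_width


def circuit_width_from_qv(qv: int) -> int:
    """Invert QV = 2**n for an exact power of two."""
    if qv <= 0 or qv & (qv - 1):
        raise ValueError("quantum volume must be a power of two")
    return qv.bit_length() - 1


print(quantum_volume(16))            # 65536
print(circuit_width_from_qv(65536))  # 16
```

A quantum volume of 65,536 therefore corresponds to passing the test with 16-qubit, depth-16 circuits, not to using all 32 qubits at once.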

Most importantly for the growing number of industrial companies and academic research institutions using the H-Series, benchmarking data shows that none of these hardware changes reduced the high performance levels achieved by the System Model H1. That’s a key challenge in scaling quantum computers – preserving performance while adding qubits. The error rate on the fully connected circuits is comparable to that of the H1, even with a significant increase in qubits. Indeed, H2 exceeds H1 in multiple performance metrics: single-qubit gate error, two-qubit gate error, measurement crosstalk, and state preparation and measurement (SPAM) error.

Key to the engineering advances made in the second-generation H-Series quantum computer are reductions in the physical resources required per qubit. To get the most out of the quantum charge-coupled device (QCCD) architecture, which the H-Series is built on, the hardware team at Quantinuum introduced a series of component innovations that eliminate some performance limitations of the first generation in areas such as ion loading, voltage sources, and the delivery of high-precision radio signals to control and manipulate ions.

The research paper, “A Race Track Trapped-Ion Quantum Processor,” details all of these engineering advances, and exactly what impacts they have on the computing performance of the machine. The paper includes results from component and system-level benchmarking tests that document the new machine’s capabilities at launch. These benchmarking metrics, combined with the company’s advances in topological qubits, represent a new phase of quantum computing.

Advancing Beyond Classical Simulation

In addition to the expanded capabilities, the new design provides operational efficiencies and a clear growth path.

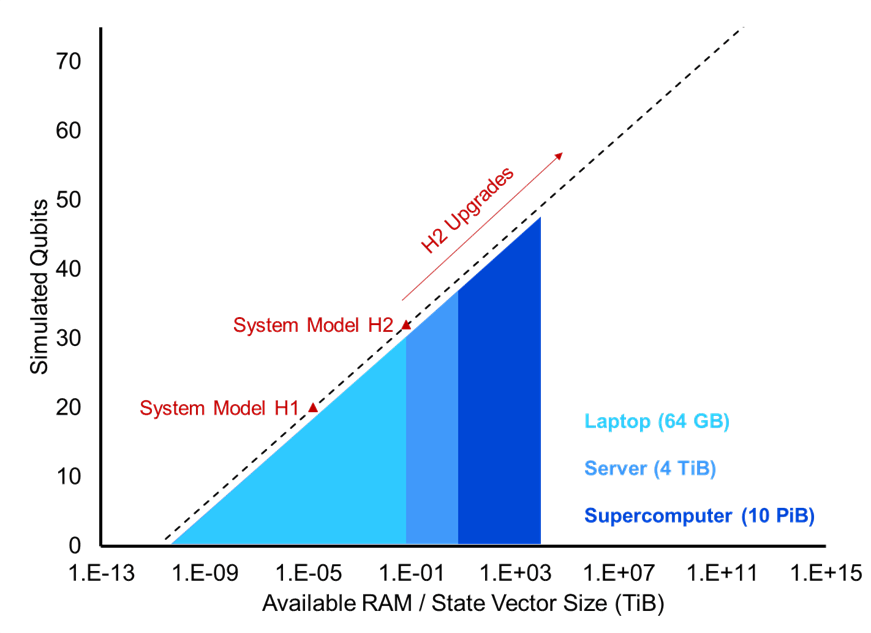

At launch, H2’s operations can still be emulated classically. However, Quantinuum released H2 at a small percentage of its full capacity. This new machine has the ability to upgrade to more qubits and gate zones, pushing it past the level where classical computers can hope to keep up.
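To give a rough sense of why adding qubits pushes a machine beyond classical emulation, consider the memory needed to hold a full state vector. The sketch below assumes dense state-vector simulation with 16-byte complex amplitudes, which is only one emulation strategy among several; the 40- and 50-qubit entries are hypothetical sizes for comparison, not announced configurations.

```python
def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory for a dense state vector: 2**n amplitudes at 16 bytes each (complex128)."""
    return (2 ** n_qubits) * bytes_per_amplitude


# 20 and 32 are the H1 and H2 qubit counts from the text; 40 and 50 are hypothetical.
for n in (20, 32, 40, 50):
    gib = statevector_bytes(n) / 2 ** 30
    print(f"{n} qubits: {gib:,.3f} GiB")
```

Under these assumptions 32 qubits need 64 GiB, which a large workstation can hold, while every additional qubit doubles the requirement.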

Increased Efficiency in New Trap Design

This new generation quantum processor represents the first major trap upgrade in the H-Series. One of the most significant changes is the new oval (or racetrack) shape of the ion trap itself, which allows for a more efficient use of space and electrical control signals.

One key engineering challenge presented by this new design was the ability to route signals beneath the top metal layer of the trap. The hardware team addressed this by using radiofrequency (RF) tunnels. These tunnels allow inner and outer voltage electrodes to be implemented without being directly connected on the top surface of the trap, which is the key to making truly two-dimensional traps that will greatly increase the computational speed of these machines.

The new trap also features voltage “broadcasting,” which saves control signals by tying multiple DC electrodes within the trap to the same external signal. This is accomplished in “conveyor belt” regions on each side of the trap where ions are stored, improving electrode control efficiency by requiring only three voltage signals for 20 wells on each side of the trap.
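The saving from broadcasting can be illustrated with a small mapping from electrodes to shared signals. The sketch below is only an illustration: the three signals and 20 wells come from the text, but the periodic wiring pattern (every third electrode tied together) and the figure of three electrodes per well are assumptions made here, not details taken from the paper.

```python
from collections import defaultdict

N_SIGNALS = 3            # from the text: three voltage signals per side
N_WELLS = 20             # from the text: 20 wells per side
ELECTRODES_PER_WELL = 3  # assumption: one period of the wiring pattern per well


def broadcast_map(n_electrodes: int, n_signals: int) -> dict:
    """Tie electrode e to shared signal e % n_signals (hypothetical periodic wiring)."""
    groups = defaultdict(list)
    for electrode in range(n_electrodes):
        groups[electrode % n_signals].append(electrode)
    return dict(groups)


n_electrodes = N_WELLS * ELECTRODES_PER_WELL
groups = broadcast_map(n_electrodes, N_SIGNALS)
for signal, electrodes in groups.items():
    print(f"signal {signal} drives {len(electrodes)} electrodes")

signals_saved = n_electrodes - N_SIGNALS
print("signals saved versus one per electrode:", signals_saved)
```

Because neighbouring electrodes still receive different signals, stepping the three shared waveforms in sequence can shift every well along the region together, which is the idea behind the conveyor belt description.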

The other significant new component of H2 is the magneto-optical trap (MOT), which replaces the effusive atomic oven that H1 used. The MOT reduces the startup time for H2 by cooling the neutral atoms before shooting them at the trap, which will be crucial for very large machines that use large numbers of qubits.

Industry-leading Results from 15 Benchmarking Tests

Quantinuum has always valued transparency and supported its performance claims with publicly available data.

To quantify the impact of these hardware and design improvements, Quantinuum ran 15 tests that measured component operations, overall system performance and application performance. The complete results from the tests are included in the new research paper.

The hardware team ran four system-level benchmark tests that included more complex, multi-qubit circuits to give a broader picture of overall performance. These tests were:

- Mirror benchmarking: A scalable way to benchmark arbitrary quantum circuits.

- Quantum volume: A popular system-level test with a well-established construction that is comparable across gate-based quantum computers.

- Random circuit sampling: A computational task of sampling the output distributions of random quantum circuits.

- Entanglement certification in Greenberger-Horne-Zeilinger (GHZ) states: A demanding test of qubit coherence that is widely measured and reported across a variety of quantum hardware.
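The idea behind mirror benchmarking, running a circuit and then its inverse so that the ideal outcome is known in advance, can be sketched in a few lines. This is a noise-free toy using random dense unitaries in NumPy; the protocol used on hardware adds further randomization, and the function names are illustrative.

```python
import numpy as np


def random_unitary(dim: int, rng: np.random.Generator) -> np.ndarray:
    """Random unitary from the QR decomposition of a complex Gaussian matrix."""
    z = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    q, r = np.linalg.qr(z)
    phases = np.diag(r) / np.abs(np.diag(r))
    return q * phases  # rescale each column by a unit phase


def mirror_survival(n_qubits: int, depth: int, rng: np.random.Generator) -> float:
    """Apply `depth` random layers, then their inverses in reverse order."""
    dim = 2 ** n_qubits
    layers = [random_unitary(dim, rng) for _ in range(depth)]
    state = np.zeros(dim, dtype=complex)
    state[0] = 1.0  # start in |00...0>
    for u in layers:
        state = u @ state
    for u in reversed(layers):
        state = u.conj().T @ state
    return float(abs(state[0]) ** 2)  # probability of returning to |00...0>


survival = mirror_survival(n_qubits=3, depth=5, rng=np.random.default_rng(0))
print(f"ideal survival probability: {survival:.6f}")
```

On real hardware the survival probability falls below 1, and how quickly it falls with circuit size is what the benchmark reports.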

H2 showed state-of-the-art performance on each of these system-level tests, but the results of the GHZ test were particularly impressive. The verification of the globally entangled GHZ state requires a relatively high fidelity, which becomes harder and harder to achieve with larger numbers of qubits.

With H2’s 32 qubits and precision control of the environment in the ion trap, Quantinuum researchers were able to achieve an entangled state of 32 qubits with a fidelity of 82.0(7)%, setting a new world record.
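To make the fidelity figure concrete, the sketch below builds an ideal GHZ state and computes its fidelity with a noisy version. The white-noise model and the values of `n` and `p` are assumptions chosen for illustration, not the noise observed on H2, and a small `n` is used because a dense 32-qubit density matrix would not fit in memory. For GHZ states, a fidelity above 0.5 is the standard threshold for certifying genuine multi-qubit entanglement.

```python
import numpy as np


def ghz_state(n_qubits: int) -> np.ndarray:
    """(|00...0> + |11...1>) / sqrt(2) as a dense state vector."""
    psi = np.zeros(2 ** n_qubits, dtype=complex)
    psi[0] = psi[-1] = 1 / np.sqrt(2)
    return psi


def ghz_fidelity_with_white_noise(n_qubits: int, p: float) -> float:
    """Fidelity <GHZ|rho|GHZ> for rho = p |GHZ><GHZ| + (1 - p) I / 2**n."""
    psi = ghz_state(n_qubits)
    dim = 2 ** n_qubits
    rho = p * np.outer(psi, psi.conj()) + (1 - p) * np.eye(dim) / dim
    return float(np.real(psi.conj() @ rho @ psi))


fidelity = ghz_fidelity_with_white_noise(n_qubits=4, p=0.8)
print(f"fidelity: {fidelity:.4f}")
print("certifies GHZ entanglement:", fidelity > 0.5)
```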

In addition to the system-level tests, the Quantinuum hardware team ran these component benchmark tests:

- SPAM experiment

- Single-qubit gate randomized benchmarking

- Two-qubit gate randomized benchmarking

- Two-qubit SU(4) gate randomized benchmarking

- Two-qubit parameterized gate randomized benchmarking

- Measurement/reset crosstalk benchmarking

- Interleaved transport randomized benchmarking

The paper includes results from those tests as well as results from these application benchmarks:

- Hamiltonian simulation

- Quantum Approximate Optimization Algorithm

- Error correction: repetition code

- Holographic quantum dynamics simulation
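As background for the repetition-code benchmark listed above, the sketch below computes how often majority-vote decoding fails in the simplest classical picture. It assumes independent bit flips with probability `p` and perfect readout, which is a textbook simplification and not the experiment reported in the paper.

```python
from math import comb


def logical_error_rate(distance: int, p: float) -> float:
    """Probability that more than half of `distance` bits flip (odd distance assumed)."""
    if distance % 2 == 0:
        raise ValueError("use an odd code distance so the majority vote cannot tie")
    threshold = distance // 2
    return sum(
        comb(distance, k) * p ** k * (1 - p) ** (distance - k)
        for k in range(threshold + 1, distance + 1)
    )


for d in (1, 3, 5, 7):
    print(f"distance {d}: logical error rate {logical_error_rate(d, 0.1):.6f}")
```

For a physical error rate below 50%, each increase in distance lowers the logical error rate, which is the behaviour an error-correction benchmark looks for.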

About Quantinuum

Quantinuum, the world’s largest integrated quantum company, pioneers powerful quantum computers and advanced software solutions. Quantinuum’s technology drives breakthroughs in materials discovery, cybersecurity, and next-gen quantum AI. With over 500 employees, including 370+ scientists and engineers, Quantinuum leads the quantum computing revolution across continents.

At the heart of quantum computing’s promise lies the ability to solve problems that are fundamentally out of reach for classical computers. One of the most powerful ways to unlock that promise is through a novel approach we call Generative Quantum AI, or GenQAI. A key element of this approach is the Generative Quantum Eigensolver (GQE).

GenQAI is based on a simple but powerful idea: combine the unique capabilities of quantum hardware with the flexibility and intelligence of AI. By using quantum systems to generate data, and then using AI to learn from and guide the generation of more data, we can create a powerful feedback loop that enables breakthroughs in diverse fields.

Unlike classical systems, our quantum processing unit (QPU) produces data that is extremely difficult, if not impossible, to generate classically. That gives us a unique edge: we’re not just feeding an AI more text from the internet; we’re giving it new and valuable data that can’t be obtained anywhere else.

The Search for Ground State Energy

One of the most compelling challenges in quantum chemistry and materials science is computing the properties of a molecule’s ground state. For any given molecule or material, the ground state is its lowest energy configuration. Understanding this state is essential for understanding molecular behavior and designing new drugs or materials.

The problem is that accurately computing this state for anything but the simplest systems is incredibly complicated. You cannot even do it by brute force—testing every possible state and measuring its energy—because the number of quantum states grows as a double-exponential, making this an ineffective solution. This illustrates the need for an intelligent way to search for the ground state energy and other molecular properties.

That’s where GQE comes in. GQE is a methodology that uses data from our quantum computers to train a transformer. The transformer then proposes promising trial quantum circuits: ones likely to prepare states with low energy. You can think of it as an AI-guided search engine for ground states. The novelty is in how our transformer is trained from scratch using data generated on our hardware.

Here's how it works:

- We start with a batch of trial quantum circuits, which are run on our QPU.

- Each circuit prepares a quantum state, and we measure the energy of that state with respect to the Hamiltonian for each one.

- Those measurements are then fed back into a transformer model (the same architecture behind models like GPT-2) to improve its outputs.

- The transformer generates a new distribution of circuits, biased toward ones that are more likely to find lower energy states.

- We sample a new batch from the distribution, run them on the QPU, and repeat.

- The system learns over time, narrowing in on the true ground state.
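The loop above can be sketched as a toy in NumPy. Everything here is a stand-in and should be read as an assumption: a random 4x4 symmetric matrix replaces the molecular Hamiltonian, a small pool of rotations replaces real gates, a NumPy function replaces the QPU, and a per-position softmax table updated from the best samples replaces the transformer. It shows only the sample, measure, update cycle, not the actual GQE method.

```python
import numpy as np


def rotation(i, j, theta, dim=4):
    """Real rotation mixing basis states i and j; a toy stand-in for a gate."""
    u = np.eye(dim)
    c, s = np.cos(theta), np.sin(theta)
    u[i, i], u[j, j], u[i, j], u[j, i] = c, c, -s, s
    return u


def gqe_style_search(steps=30, depth=8, batch_size=64, seed=0):
    rng = np.random.default_rng(seed)
    a = rng.normal(size=(4, 4))
    hamiltonian = (a + a.T) / 2  # hypothetical Hamiltonian, not H2's
    exact_ground = float(np.linalg.eigvalsh(hamiltonian)[0])
    pool = [rotation(i, j, t)
            for i in range(4) for j in range(i + 1, 4) for t in (0.3, -0.3)]

    def energy(circuit):
        """Stand-in for running the circuit on a QPU and measuring <H>."""
        psi = np.zeros(4)
        psi[0] = 1.0
        for gate_index in circuit:
            psi = pool[gate_index] @ psi
        return float(psi @ hamiltonian @ psi)

    logits = np.zeros((depth, len(pool)))  # stand-in for the generative model
    best, history = np.inf, []
    for _ in range(steps):
        # Turn the model parameters into a distribution over circuits.
        probs = np.exp(logits - logits.max(axis=1, keepdims=True))
        probs /= probs.sum(axis=1, keepdims=True)
        # Sample a batch of circuits and evaluate their energies.
        batch = np.array([[rng.choice(len(pool), p=probs[d]) for d in range(depth)]
                          for _ in range(batch_size)])
        energies = np.array([energy(c) for c in batch])
        best = min(best, float(energies.min()))
        history.append(best)
        # Bias the model toward gate choices seen in the lowest-energy quarter.
        elite = batch[np.argsort(energies)[: batch_size // 4]]
        for d in range(depth):
            counts = np.bincount(elite[:, d], minlength=len(pool))
            logits[d] += 0.5 * counts / len(elite)
    return history, exact_ground


history, exact_ground = gqe_style_search()
print(f"best sampled energy: {history[-1]:.4f}")
print(f"exact ground energy: {exact_ground:.4f}")
```

The best sampled energy can never drop below the exact ground energy, so comparing the two gives a simple check on how close the search has come.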

To test our system, we tackled a benchmark problem: finding the ground state energy of the hydrogen molecule (H₂). This is a problem with a known solution, which allows us to verify that our setup works as intended. Our GQE system successfully found the ground state to within chemical accuracy.

To our knowledge, we’re the first to solve this problem using a combination of a QPU and a transformer, marking the beginning of a new era in computational chemistry.

The Future of Quantum Chemistry

The idea of using a generative model guided by quantum measurements can be extended to a whole class of problems—from combinatorial optimization to materials discovery, and potentially, even drug design.

By combining quantum computing and AI, we can unlock their full joint potential. Our quantum processors can generate rich data that was previously unobtainable. Then, an AI can learn from that data. Together, they can tackle problems neither could solve alone.

This is just the beginning. We’re already looking at applying GQE to more complex molecules—ones that can’t currently be solved with existing methods, and we’re exploring how this methodology could be extended to real-world use cases. This opens many new doors in chemistry, and we are excited to see what comes next.

Last year, we joined forces with RIKEN, Japan's largest comprehensive research institution, to install our hardware at RIKEN’s campus in Wako, Saitama. This deployment is part of RIKEN’s project to build a quantum-HPC hybrid platform consisting of high-performance computing systems, such as the supercomputer Fugaku and Quantinuum Systems.

Today, a paper published in Physical Review Research marks the first of many breakthroughs coming from this international supercomputing partnership. The team from RIKEN and Quantinuum joined up with researchers from Keio University to show that quantum information can be delocalized (scrambled) using a quantum circuit modeled after periodically driven systems.

"Scrambling" of quantum information happens in many quantum systems, from those found in complex materials to black holes. Understanding information scrambling will help researchers better understand things like thermalization and chaos, both of which have wide reaching implications.

To visualize scrambling, imagine a set of particles (say bits in a memory), where one particle holds specific information that you want to know. As time marches on, the quantum information will spread out across the other bits, making it harder and harder to recover the original information from local (few-bit) measurements.

While many classical techniques exist for studying complex scrambling dynamics, quantum computing has long been seen as a promising tool for these types of studies, due to its inherently quantum nature and the ease of implementing quantum elements like entanglement. The joint team confirmed that promise with their latest result, which shows not only that scrambling states can be generated on a quantum computer, but also that they behave as expected and are ripe for further study.

Thanks to this new understanding, we now know that the preparation, verification, and application of a scrambling state, a key quantum information state, can be consistently realized using currently available quantum computers. Read the paper here, and read more about our partnership with RIKEN here.

In our increasingly connected, data-driven world, cybersecurity threats are more frequent and sophisticated than ever. To safeguard modern life, government and business leaders are turning to quantum randomness.

What is quantum randomness, and why should you care?

The term to know: quantum random number generators (QRNGs).

QRNGs exploit quantum mechanics to generate truly random numbers, providing the highest level of cryptographic security. This supports, among many things:

- Protection of personal data

- Secure financial transactions

- Safeguarding of sensitive communications

- Prevention of unauthorized access to medical records

Quantum technologies, including QRNGs, could protect up to $1 trillion in digital assets annually, according to a recent report by the World Economic Forum and Accenture.

Which industries will see the most value from quantum randomness?

The World Economic Forum report identifies five industry groups where QRNGs offer high business value and clear commercialization potential within the next few years. Those include:

- Financial services

- Information and communication technology

- Chemicals and advanced materials

- Energy and utilities

- Pharmaceuticals and healthcare

In line with these trends, recent research by The Quantum Insider projects the quantum security market will grow from approximately $0.7 billion today to $10 billion by 2030.

When will quantum randomness reach commercialization?

Quantum randomness is already being deployed commercially:

- Early adopters use our Quantum Origin in data centers and smart devices.

- Amid rising cybersecurity threats, demand is growing in regulated industries and critical infrastructure.

Recognizing the value of QRNGs, the financial services sector is accelerating its path to commercialization.

- Last year, HSBC conducted a pilot combining Quantum Origin and post-quantum cryptography to future-proof gold tokens against “store now, decrypt later” (SNDL) threats.

- And, just last week, JPMorganChase made headlines by using our quantum computer for the first successful demonstration of certified randomness.

On the basis of the latter achievement, we aim to broaden our cybersecurity portfolio with the addition of a certified randomness product in 2025.

How is quantum randomness being regulated?

The National Institute of Standards and Technology (NIST) defines the cryptographic regulations used in the U.S. and other countries.

- NIST’s SP 800-90B framework assesses the quality of random number generators.

- The framework is part of the FIPS 140 standard, which governs cryptographic systems operations.

- Organizations must comply with FIPS 140 for their cryptographic products to be used in regulated environments.
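To give a flavor of what assessing the quality of a random number generator involves, the sketch below implements the most common value estimate, one of the min-entropy estimators described in SP 800-90B. A full validation applies a whole suite of estimators and additional tests, so this is an illustration only; Python's seeded pseudorandom generator is used as a stand-in data source and is not a QRNG.

```python
import math
import random
from collections import Counter


def mcv_min_entropy(samples) -> float:
    """Most common value estimate of min-entropy, in bits per sample."""
    n = len(samples)
    p_hat = max(Counter(samples).values()) / n
    # Upper end of a 99% confidence interval on the most likely value's probability.
    p_upper = min(1.0, p_hat + 2.576 * math.sqrt(p_hat * (1 - p_hat) / (n - 1)))
    return -math.log2(p_upper)


source = random.Random(0)  # stand-in source for illustration only
fair_bits = [source.getrandbits(1) for _ in range(100_000)]
stuck_bits = [1] * 100_000

fair_entropy = mcv_min_entropy(fair_bits)
stuck_entropy = mcv_min_entropy(stuck_bits)
print(f"unbiased bits: {fair_entropy:.3f} bits per sample")
print(f"stuck-at-one bits: {stuck_entropy:.3f} bits per sample")
```

An ideal binary source approaches 1 bit of min-entropy per sample, while a source stuck on one value scores 0.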

This week, we announced Quantum Origin received NIST SP 800-90B Entropy Source validation, marking the first software QRNG approved for use in regulated industries.

What does NIST validation mean for our customers?

This means Quantum Origin is now available for high-security cryptographic systems and integrates seamlessly with NIST-approved solutions without requiring recertification.

- Unlike hardware QRNGs, Quantum Origin requires no network connectivity, making it ideal for air-gapped systems.

- For federal agencies, it complements our "U.S. Made" designation, easing deployment in critical infrastructure.

- It adds further value for customers building hardware security modules, firewalls, PKIs, and IoT devices.

The NIST validation, combined with our peer-reviewed papers, further establishes Quantum Origin as the leading QRNG on the market.

--

It is paramount for governments, commercial enterprises, and critical infrastructure to stay ahead of evolving cybersecurity threats to maintain societal and economic security.

Quantinuum delivers the highest quality quantum randomness, enabling our customers to confront the most advanced cybersecurity challenges present today.